|

It seems like today's Project NExT panel on flipped classrooms was a big success. We had a lot of interest in the session, and the questions and discussion were really insightful and engaging. I'd like to thank Rachel Levy, Elizabeth Stepp, Jeremy Strayer, and Ron Taylor for donating their time and expertise as panelists! Also a big thanks to my co-organizers Brandy Doleshal, Stacy Hoehn, and Larissa Shroeder

0 Comments

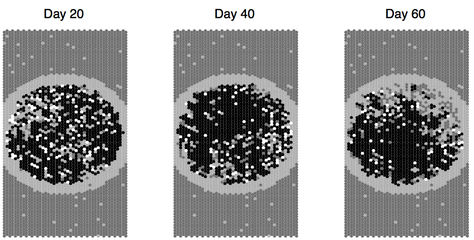

Katie Montovan, Tom Seeley, Laura Jones and I have just recently had a piece of work looking all cell allocation in honey bee comb accepted at the Journal of Theoretical Biology. I figured it would be a good time to give a run-down of the project. You might've heard that honey bees perform a waggle dance inside the hive to communicate the location of food sources to their sisters. What I didn't know at the start of this project is that honey bees also have a fairly sophisticated resource storage strategy. A global pattern of cell allocation emerges from the individual actions of many bees. Our project extends a model proposed by Scott Camazine and coauthors by presenting biologically relevant and realistic rules that individual bees could follow in order to both develop and maintain the observed pattern. Here I'll give a brief overview of the problem and our work. For a complete review of the literature, check out the arXiv preprint or the accepted version at JTB. Brian Storey, John Geddes and I just submitted a piece of work on spontaneous oscillations in a simple network containing two miscible fluids of differing viscosities. We catalogue spontaneous oscillations and numerically continue these behaviors through the high dimensional parameter space of the system. This technique allows us to construct interesting representations of the phase space that should be helpful in confirming our predictions. The preprint is located on arXiv.

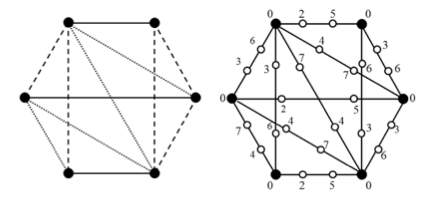

With summer here, I've had some time to square away some projects I've been working on. It's been great to reconnect with them after all the craziness that comes with the end of the semester. I'll devote a post to each of the projects I've been working on over the last little while. Denise Troxell, Sarah Adams and I advise a group of Olin undergraduates who work on discrete problems, usually centered on $L(d,1)$-labelings of graphs. This research stems from the frequency assignment problem in wireless communications: how do you assign frequency bands to spatially distributed transmitters such that transmitters that are in close proximity to one another use frequencies that are sufficiently spectrally separated to avoid interference? Mathematically, we consider the transmitters to be the vertices of a graph $G$, with vertices $x$ and $y$ adjacent if the associated transmitters are closer than some distance threshold. We can then think of a "good'' frequency assignment as a function $f : V(G) \rightarrow \{0,1,2,...,c\}$ such that $|f(x) - f(y)| \geq d$ if $x$ and $y$ are adjacent and $|f(x) - f(y)| \geq 1$ if $x$ and $y$ are at distance 2 in $G$. This is an $L(d,1)$-labeling of $G$. For a fixed graph $G$, the minimum value of $c$ over all $L(d,1)$-labelings is denoted $\lambda_d(G)$. A lot of work has been dedicated to determining $\lambda_d(G)$. The major open conjecture in the field was made by Griggs and Yeh and states that $\lambda_2(G) \leq \Delta^2$, where $\Delta$ is the maximum degree of $G$. Georges, Mauro, and Whittlesey show that determining $\lambda_d(G)$ is NP-Complete in general. This has motivated many researchers, including those in our group, to investigate classes of graphs that are of practical or theoretical interest. Our newest piece of work, just accepted to the Journal of Combinatorial Optimization, deals with so-called edge-path replacement graphs. Here we replace each edge of a graph $G$ with a path $P_k$ on $k$ vertices to form $G(P_k)$. This is a really interesting problem, because degree and distance play such large roles in $L(d,1)$-labelings. By adding these paths, we're in some sense increasing the distance between high degree vertices while at the same time preserving the maximum degree of the graph. Our solution to this problem involved decomposing $G$ using a 2-factorization, and then labeling each of the 2-factor separately using a specific scheme. The results prove a conjecture made by Lu: for any graph $G$ with $\Delta \geq 2$, $\lambda_2(G(P_4)) \leq \Delta + 2$. Ours are significantly more general: for any graph $G$ with $\Delta > 2$, $\lambda_d(G(P_k)) \leq d + \lceil \Delta /2 \rceil + \max ( \lceil \Delta / 2 \rceil, d) $ for any $d \geq 2$ and $k \geq 4$. The case with $\Delta \leq 2$ deals with graphs that are unions of paths and cycles, and the $L(d,1)$-labelings of these graphs are well understood. The case of $k = 3$ is the so-called total labeling of $G$ and is significantly harder. In some cases we can do a little bit better than the bounds I've quoted here.

All in all, the project was a great team effort. It's great to work with such bright and motivated undergrads. It looks like both Jessica and Jason are going to stick around next semester, so look for more great work to come from these two.  This semester Oscar Fernandez and I have been advising a few teams of Babson and Wellesley undergraduates doing mathematics consulting on projects business, industry and government. Oscar advised two teams working for Boston Scientific, and I advised a team working for BigBelly. I was lucky enough to have a fantastic team to work with. This allowed me to take a hands-off approach so that Sophia, Elize, Rachel, Marjorie and Sarika could really get a sense of ownership in the project. And while I can't be too specific on the details of the work they did, I'm very happy with where they ended up. It was great seeing them present the results from their hard work last week. Oscar and I are planning on continuing this program based on the early successes we had this semester. So if you're a Babson, Olin or Wellesley student reading this and are interested getting hands-on experience applying mathematics in a business or industrial context, shoot me an email. I've been meaning to write a post about research or teaching, both of which have been really heating up since spring break, but I haven't found the time. But I saw this article by Ben Orlin this morning and thought it was worth sharing right away. Small spoiler: Not understanding topology doesn’t make me stupid. It makes me bad at topology. That’s a difference worth remembering, whether you’re a math prodigy, a struggling student, or a teacher holding your students’ sense of self-worth in the palm of your hand. Failing at math ought to be like any failure, frustrating but ultimately instructive. In the end, I’m grateful for the experience. Just as therapists must undergo therapy as part of their training, no math teacher ought to set foot near human students until they’ve felt the sting of mathematical failure.

Dave crushed this thing in two parts, and since NPR's solution was along the lines of "count 'em up" and didn't give a formula for the case when you have $n$ people at the table, I figured I'd write up his solution.

Let $C_n$ be the number of ways to seat $n$ people at a circular table according to the restrictions laid out in the problem statement. One hard thing to deal with here is the fact that the table is circular. Dave's first good idea was to break the symmetry. Without loss of generality, choose 2 adjacent people at the table. Now, in each valid configuration, either these two people switch places or they don't, and, most importantly, the remaining people can be thought of sitting in a line rather than a circle. Each case defines a subproblem that is really tractable. We define a new and related problem: how many ways are there to reseat $n$ people seated in a line such that each person switches either with their left-hand or right-hand neighbor or remains in their original spot? Define $L_n$ to be the number of ways to seat $n$ people according to these restrictions. Noting $L_1 = 1$ and $L_2 = 2$, we can go forward with induction. Suppose $L_k$ is known for $k < n$ and consider $L_n$. In each valid seating arrangement, either the $n^{th}$ person switches with her neighbor or she does not. In the case where she does not, her neighbor is unconstrained by her presence, and so there are $L_{n-1}$ ways to reseat the remaining $n-1$ people. In the case where she and her neighbor switch positions, the remaining $n-2$ are free to sit however they like subject to the rules in the problem statement, and so there are $L_{n-2}$ ways to reseat them. Assembling our cases gives $L_n = L_{n-1} + L_{n-2}$. Note that this means solutions to this subproblem are Fibonacci numbers! (Our indexing here is off by one, so that $L_n = F_{n+1}$ for $n \geq 1$.) With this new result in hand, let's turn our attention back to the original problem. Recall that we arbitrarily chose a pair of adjacent people at the table. If the pair does not switch, then all $n$ people at the table are free to reorder themselves as if they were seated in a line. Thus, there are $L_n$ ways to reseat the table if the chose pair does not switch. If they do switch, the remaining $n-2$ people at the table are free to reorder themselves as if they were seated in a line, and so there are $L_{n-2}$ ways to reseat the table in this case. We also must account for the fact that the entire table can rotate either one seat to the right or one seat to the left. So the total number of ways to reseat the table is $C_n = L_n + L_{n-2} + 2$. With this new result in hand, let's turn our attention back to the original problem. Recall that we arbitrarily chose a pair of adjacent people at the table. If the pair does not switch, then all $n$ people at the table are free to reorder themselves as if they were seated in a line. Thus, there are $L_n$ ways to reseat the table if the chose pair does not switch. If they do switch, the remaining $n-2$ people at the table are free to reorder themselves as if they were seated in a line, and so there are $L_{n-2}$ ways to reseat the table in this case. We also must account for the fact that the entire table can rotate either one seat to the right or one seat to the left. So the total number of ways to reseat the table is $C_n = L_n + L_{n-2} + 2$. With a little massaging, we can get a statement for $C_n$ that is recursive. $$\begin{align*} C_n - C_{n-1} &= L_n + L_{n-2} + 2 - L_{n-1} - L_{n-3} - 2 \\ &= L_{n-2} + L_{n-4} \\ &= C_{n-2} - 2 \\ C_n &= C_{n-1} + C_{n-2} - 2. \end{align*}$$ For base cases, we have $C_3 = 6$ and $C_4 = 9$. Sure enough, $C_8 = L_8 + L_6 + 2 = F_9 + F_7 + 2 = 34 + 13 + 2 = 49$, just as NPR claimed. For a long list of values, see the OEIS page. Really nice proof, especially for one that Dave busted out while hiking. My brother Casey just had a paper accepted to Physics of Fluids. It's a great publication and a great journal, especially for his first time out of the gate. From the abstract: The paper describes a theoretical and experimental investigation of the laminar flow of two miscible Newtonian fluids of different density and viscosity through a simple network. The fluids stratify due to gravity and remain as nearly distinct phases with some mixing occurring only by diffusion. ... Once the phase separation at a single junction is known, a network model is developed which predicts multiple equilibria in the simplest of networks. The existence of multiple stable equilibria is confirmed experimentally and a criterion for existence is developed. I especially love that they confirmed these all these experimentally. And while the plots are nice and clean, I know how hard and long Casey worked to get all those data. Rock on, brother.

The work Casey and his coauthors did is closely related to some more theoretical stuff I've been hacking away at for the past year or so. Hopefully spring break next week will give us a chance to finalize and submit. It's midterm season! This semester I'm teaching a section of applied calculus and quantitative methods and a section of linear algebra. In both classes, I'm doing a take-home exam with an oral exam at the end. During the week-long take-home phase, students can use any resource they like, including books, notes, the internet, each other, etc. I encourage them to work together. I typically give out several versions of the exam, all with different data used in each example. This is to encourage the students to talk about the problems in general terms and to discourage them from just copying from one another.

After the take-home phase, each student comes in for a 15 minute oral exam. We go over each problem on the exam. If the work they've submitted looks correct, I'll ask the student to walk me through how they approached the problem. If it sounds like they understand the work they've submitted (and haven't just copied a classmate's work) I ask the student to define, explain or clarify a particular concept in the problem and connect it to the problem as a whole. If they can manage this, the real fun starts. I'll tweak one of the conditions in the problem and ask them how the change will affect their answer. I don't ask them to re-solve the problem on the spot typically; I just want to know if they really see all the dependencies and interrelations between the concepts. I grade each problem on a 4 point scale with 4 being phenomenal work, and 0 indicating they couldn't even explain how they approached the problem. This also roughly corresponds to the A - F letter scale. Being able to talk your way through how you approached a problem gets you one point. Being able to clearly define, explain or clarify a chosen concept gets you another; this is C level understanding. The "tweak" usually occurs in two parts, an easier version and a harder version. Correctly answering gets you one point each. There are obviously shades of grey in all these, and I typically award partial credit in 1/3 point increments to make the +/- letter scaling work out well. While I'm still working on the specifics, I love the take-home then oral exam model for a lot of reasons. I think it better simulates projects in the real world, both on time limits and collaboration. And with application-oriented students, connecting something to the business world gives you a ton of traction. One of my linear algebra students stated it really well (I'll paraphrase): "Taking a timed exam without resources or collaboration is like doing Mad Max mathematics." I couldn't agree more. Another reason I like the system is the fact that I get to see exactly what each student knows (and doesn't). I have a much better idea of where each of them is on their path to mastery than I used to. I've been genuinely surprised at the level of specificity we can resolve in 15 minutes. For instance, last semester during an oral exam I found out that a student understood everything about nonlinear optimization except evaluating endpoints of the domain. I'm not sure I would've got this level of understanding by looking at a printed exam. The last reason is that I can give much more challenging and interesting problems, because I can expect students to learn and teach each other over the course of the take home portion. My understanding so far of the student experience is that they're initially really nervous about the idea of an oral exam, which is new to most of them. But they eventually really warm up to the format. Last semester, I put the format of the final exam up to an anonymous vote in class: should it be a traditional exam of a take-home+oral exam? Over 90% of students (N=60) voted for the nontraditional format. I don't think I could get 90% of them to agree on almost anything, so I took this as confirmation that something about this model was working for them. For some examples, here's the midterm in applied calculus and the midterm in linear algebra. Notice in linear algebra I can use the midterm to preview things that we'll see more in depth later in the semester like multilinear and logistic regression. In calculus I can give them fairly gnarly The people over at Secret Blogging Seminar have a great post on a calculus-free proof of the spectral theorem. I have a group of (very) applied mathematical students, many of whom have never had a multivariate calculus course, so a proof like this will be great. From the post: Nonetheless, I am amazed that every textbook I have seen uses the "optimize a quadratic form on the unit ball" argument rather than this algebraic once. Lots of students don't remember multivariable calculus well, and existence of maxima of continuous functions on multidimensional bounded domains is complicated. Plus, I find a lot of students have trouble with an inductive process like getting one eigenvector and splitting off an orthogonal complement. |

|

Photo from ByoLogyc

RSS Feed

RSS Feed